Difference between revisions of "Error analysis"


Revision as of 04:52, 27 February 2014

Overview

A thorough, correct, and precise discussion of experimental errors is the core of a superior lab report, and of life in general. This page will help you understand and clearly communicate the causes and consequences of experimental error.

What is experimental error?

The goal of a measurement is to determine an unknown physical quantity Q. The measurement procedure you use will produce a measured value M that in general differs from Q by some amount E. Experimental error, E, is the difference between the true value Q and the value you measure M, E = Q - M.

“Experimental error” is not a synonym for “experimental mistake,” although mistakes you make during the experiment can certainly result in errors.

Sources of error

Error sources are root causes of experimental errors. The error in a measurement E is equal to the sum of errors caused by all sources. Some examples of error sources are: thermal noise, interference from other pieces of equipment, and miscalibrated instruments. Error sources fall into three categories: fundamental, technical, and illegitimate. (Inherent is a synonym for fundamental.) Fundamental error sources are physical phenomena that place an absolute lower limit on experimental error. They cannot be reduced. Technical error sources can (at least in theory) be reduced by improving the instrumentation or measurement procedure — a proposition that frequently involves spending money. Illegitimate errors are mistakes made by the experimenter that affect the results. There is no excuse for those. An ideal measurement is limited by fundamental error sources only.

Measurement quality

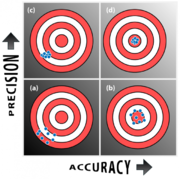

Measurements are characterized by their precision and accuracy.

Precision quantifies the variability in a measurement. Because nearly all measurements involve error sources that introduce random variation, repeated measurements of the same quantity Q rarely give identical values. Random errors are unrepeatable fluctuations that reduce precision. Observational errors E that result from random errors can be modeled as random variables with average μ = 0 and standard deviation σ.

Accuracy specifies how far a measured value M is from the true value Q. Common error sources that affect accuracy are offset error (also called zero-point error), sensitivity error (also called percentage error), and nonlinearity. Systematic errors are repeatable errors that reduce accuracy. Observational errors E resulting from systematic errors have a constant value (in the case of an offset error) or are deterministic functions of Q. For example, sensitivity error gives rise to observational error E = K Q.
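The two simplest systematic error models above can be written as small functions. This is a minimal sketch that follows this page's sign convention, E = Q - M. The function names and the example constants (a 0.5 unit offset, a 2% sensitivity error) are illustrative assumptions, not values from any real instrument.

```python
def offset_error(q, k_offset):
    """Offset (zero-point) error: the same value for every Q."""
    return k_offset


def sensitivity_error(q, k):
    """Sensitivity (percentage) error: proportional to Q, E = K * Q."""
    return k * q


def measured_value(q, error):
    """Page convention: E = Q - M, so M = Q - E."""
    return q - error


# Hypothetical instrument with a 0.5 unit offset or a 2% sensitivity error.
for q in (10.0, 100.0):
    m_offset = measured_value(q, offset_error(q, 0.5))
    m_sens = measured_value(q, sensitivity_error(q, 0.02))
    print(f"Q = {q:6.1f}   offset: M = {m_offset:6.2f}   sensitivity: M = {m_sens:6.2f}")
```

The offset error is the same at both values of Q, while the sensitivity error grows in proportion to Q.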

Most measurements have a combination of random and systematic errors.

Averaging

One way to refine a measurement that exhibits random variation is to average N measurements:

- $ M=\frac{1}{N}\sum_{i=1}^{N}{M_i}=Q-\frac{1}{N}\sum_{i=1}^{N}{E_i} $

where $M_i$ is the ith measurement and $E_i$ is the ith measurement error, $E_i = Q - M_i$. If the measurement errors are truly random, the error terms $E_i$ will tend to cancel each other. Averaging several measurements increases the precision of the result at the cost of decreased measurement bandwidth. In other words, precision comes at the expense of a longer measurement.

Standard deviation is a common metric for specifying the variability of a single measurement. When you measure Q with an instrument that has an approximately Gaussian random error with standard deviation σ, about 68% of the measured values fall within ±σ of the average and about 95% fall within ±2σ.

The central limit theorem offers a mathematical model to explain what happens when you average multiple measurements. Informally stated, the central limit theorem says that when you average N independent measurements that come from a distribution with average μ and standard deviation σ, the result will have an average value of μ and a standard deviation of σ/√N. In other words, averaging N measurements increases the precision of a measurement by a factor of √N in many situations.
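A quick simulation can make the √N behavior concrete. The sketch below assumes independent Gaussian errors and uses made-up values for the true quantity and the single-measurement standard deviation. It compares the observed spread of N-measurement averages with σ/√N.

```python
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable

Q = 37.0      # assumed true value (arbitrary units)
SIGMA = 2.0   # assumed standard deviation of a single measurement


def averaged_measurement(n):
    """Average n simulated measurements M_i = Q - E_i with Gaussian E_i."""
    return statistics.fmean([Q - random.gauss(0.0, SIGMA) for _ in range(n)])


def spread_of_average(n, trials=2000):
    """Estimate the standard deviation of the n-measurement average."""
    return statistics.stdev([averaged_measurement(n) for _ in range(trials)])


for n in (1, 4, 16, 64):
    observed = spread_of_average(n)
    predicted = SIGMA / n ** 0.5
    print(f"N = {n:3d}   observed = {observed:.3f}   predicted = {predicted:.3f}")
```

The observed and predicted columns should agree to within a few percent. The remaining difference is itself random error in the estimate of the spread.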

When you add independent random variables, their variances add. So if you add N independent random variables with the same variance σ², the variance of the sum will be Nσ². The theorem also says that if N is large enough, the distribution of the sum will be approximately Gaussian almost no matter what the underlying distribution of the variables is.
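The step from the variance of a sum to the standard deviation of an average can be written out explicitly. This derivation assumes the errors $E_i$ are independent and share the same variance σ²; the symbol $\sigma_M$ for the standard deviation of the averaged result is introduced here for illustration.

```latex
\operatorname{Var}\left(\sum_{i=1}^{N} E_i\right) = N\sigma^2
\quad\Longrightarrow\quad
\operatorname{Var}\left(\frac{1}{N}\sum_{i=1}^{N} E_i\right)
  = \frac{1}{N^2}\, N\sigma^2 = \frac{\sigma^2}{N}
\quad\Longrightarrow\quad
\sigma_M = \frac{\sigma}{\sqrt{N}}
```

Dividing the sum by N scales its variance by 1/N², which is why the standard deviation of the average falls as 1/√N rather than 1/N.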

According to the central limit theorem, the uncertainty in your estimate of Q in most cases decreases in inverse proportion to the square root of the number of measurements you average, N. Because the increase in precision is proportional only to the square root of N, averaging multiple measurements is frequently a resource-intensive way to achieve precision. You have to average one hundred measurements to get a single additional significant digit in your result. The central limit theorem is your frenemy. The theorem offers an elegant model of the benefit of averaging multiple measurements. But it could also have been called the Inherent Law of Diminishing Returns of the Universe. Each time you repeat a measurement, the value added by your hard work diminishes.
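The cost of extra precision is easy to tabulate. The sketch below assumes independent random errors, so that averaging N measurements improves precision by a factor of √N. The function name is hypothetical.

```python
import math


def measurements_needed(improvement):
    """Smallest N whose average is `improvement` times more precise than one measurement."""
    if improvement < 1:
        raise ValueError("improvement factor must be at least 1")
    return math.ceil(improvement ** 2)


# Each additional significant digit is a tenfold improvement in precision.
for digits in range(4):
    print(f"{digits} extra digit(s): N = {measurements_needed(10 ** digits)}")
```

Every additional digit multiplies the required number of measurements by one hundred, which is the diminishing return described above.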

Classifying errors

Classify error sources based on the way they affect the measurement. To arrive at the correct classification, you must think each source all the way through the system: how does the underlying physical phenomenon manifest itself in the final measurement? For example, many measurements are limited by random thermal fluctuations in the sample. It is possible to reduce thermal noise by cooling the experiment. Physicists cooled the pentacene molecules shown at right to a few kelvin in order to image them so majestically with an atomic force microscope. But not all measurements can be undertaken at such low temperatures. Intact biological samples do not fare particularly well near absolute zero. Thus, thermal noise could be considered a technical source in one instance (pentacene) and a fundamental source in another (most measurements of living biological samples). There is no hard and fast rule for classifying error sources. Consider each source carefully.

Types of errors: random and systematic errors

Imagine you are conducting an experiment that requires you to swallow a large, orange polka-dotted pill and take your temperature every day for a month. You have two instruments available: an analog thermometer and a digital thermometer. Both thermometers came with detailed specifications.

The manufacturer's website for the digital thermometer says that it has 0°C offset, but noise in its amplifier causes the reading to vary randomly around the true value. The variation has an approximately Gaussian distribution with an average value of 0°C and a standard deviation of 2°C. The analog thermometer's specification states that it has a standard deviation of 0°C.

The specification sheet for the analog thermometer also says that it may have an offset error of up to two degrees. Offset error means that all measurements differ from their true value by the same amount. (This is also called zero-point error.) In other words, $E = K_{offset}$. $K_{offset}$ is unknown to you and can take on any value between -2°C and +2°C. Assume you don't have a temperature standard you can use to find the value of $K_{offset}$.

In the context of observational errors, the terms precise and accurate have specific meanings. The analog thermometer is precise. The digital thermometer is accurate.

Which thermometer should you use?

It depends.

The raw temperature data from the digital and analog thermometers could be used in a variety of ways. It is easy to imagine experimental hypotheses that involve the average, change in, or variance in your temperature. The two thermometers result in different kinds of errors in each of the three circumstances.
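To see how the three uses differ, the following sketch simulates a month of readings from both thermometers and computes the average, the change from the first day to the last, and the variance. The true temperature series and the analog offset of 1.5°C are invented for illustration (the real offset is unknown within ±2°C). The digital noise of 2°C comes from the specification described on this page.

```python
import random
import statistics

random.seed(1)  # fixed seed so the run is repeatable

# Assumed true daily temperatures over 30 days (degrees C); illustrative only.
true_temps = [36.5 + 0.02 * day for day in range(30)]

K_OFFSET = 1.5        # assumed analog offset; the real value is unknown within +/-2
SIGMA_DIGITAL = 2.0   # digital thermometer noise from its specification

# Page convention: E = Q - M, so M = Q - E.
analog = [q - K_OFFSET for q in true_temps]
digital = [q - random.gauss(0.0, SIGMA_DIGITAL) for q in true_temps]


def summarize(name, readings):
    """Print and return the mean, first-to-last change, and variance."""
    mean = statistics.fmean(readings)
    change = readings[-1] - readings[0]
    variance = statistics.variance(readings)
    print(f"{name:8s} mean = {mean:6.2f}  change = {change:6.2f}  variance = {variance:6.3f}")
    return mean, change, variance


true_stats = summarize("true", true_temps)
analog_stats = summarize("analog", analog)
digital_stats = summarize("digital", digital)
```

In this model the analog offset shifts the average but drops out of both the change and the variance. The digital noise largely averages out of the mean, but it inflates the variance and can swamp a small day-to-day change.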

Some error sources result in measurement errors that do not decrease when multiple measurements are averaged. These are called systematic errors. An example of a systematic error source is a scale that reads five pounds too light all the time. This is called a zero-point or offset error. Systematic errors reduce the accuracy of a measurement.

Bottom line: the magnitude of random errors tends to decrease with larger N; the magnitude of systematic errors does not.
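The bottom line can be checked numerically. This sketch assumes Gaussian random error plus an optional constant offset, with made-up values for Q, σ, and the offset. It reports the error Q - M of the averaged result for increasing N.

```python
import random
import statistics

random.seed(2)  # fixed seed so the run is repeatable

Q = 70.0        # assumed true value
SIGMA = 2.0     # assumed random error standard deviation
K_OFFSET = 1.0  # assumed constant systematic error


def mean_error(n, offset):
    """Error Q - M of the average of n measurements, where E_i = offset + noise."""
    readings = [Q - (offset + random.gauss(0.0, SIGMA)) for _ in range(n)]
    return Q - statistics.fmean(readings)


for n in (1, 100, 10_000):
    random_only = mean_error(n, 0.0)
    with_offset = mean_error(n, K_OFFSET)
    print(f"N = {n:6d}   random only: {random_only:+.3f}   with offset: {with_offset:+.3f}")
```

As N grows, the random-only error shrinks toward zero while the error with an offset settles near the offset itself.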

If you are measuring your body mass index, which is equal to your mass in kilograms divided by your height in meters squared, with a scale that reads too light, your result M will be smaller than the true value Q. Your result will also include random variation from other sources. Averaging multiple measurements will reduce the contribution of random errors, but the measured value of BMI will still be too low. No amount of averaging will correct the problem.
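As a worked example, suppose the BMI measurement uses the scale described earlier that reads five pounds too light. The mass and height below are assumed values chosen only for illustration, and the height is treated as error-free.

```python
LB_TO_KG = 0.45359237  # kilograms per pound (exact by definition)


def bmi(mass_kg, height_m):
    """Body mass index: mass in kilograms divided by height in meters squared."""
    return mass_kg / height_m ** 2


true_mass_kg = 70.0              # assumed true mass
height_m = 1.75                  # assumed height, taken as error-free
scale_offset_kg = 5 * LB_TO_KG   # the scale reads five pounds too light

true_bmi = bmi(true_mass_kg, height_m)
measured_bmi = bmi(true_mass_kg - scale_offset_kg, height_m)

print(f"true BMI Q     = {true_bmi:.2f}")
print(f"measured BMI M = {measured_bmi:.2f}")
print(f"error E = Q - M = {true_bmi - measured_bmi:.2f}")
```

The error equals the scale offset divided by the height squared, so it is the same for every repeated weighing and does not shrink when the readings are averaged.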

Types of errors

Systematic errors affect accuracy. Random errors affect precision.

Sample bias

Quantization error

Accuracy and precision

Experimenters usually worry about two types of error in measurements: random variation and systematic bias.

References

- Gross, et al. The Chemical Structure of a Molecule Resolved by Atomic Force Microscopy. Science, 28 August 2009. DOI: 10.1126/science.1176210.